Deep Learning with Python

9.2 The limitations of deep learning

F. Chollet's "The Measure of Intelligence" is very difficult.

Chapter 9 of F. Chollet's textbook "Deep Learning with Python" seems to me to serve as a prologue to "The Measure of Intelligence".

9.1 Key concepts in review

May 6: Today I will study this part to shore up my weak points (RNNs, multi-input/multi-output models, and so on).

9.1.1 Various approaches to AI

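My grasp of machine learning may be no more than general knowledge plus a little extra.

Let me take a brief look at Chapter 7, Ensemble Learning and Random Forests, of A. Géron's Hands-On Machine Learning with Scikit-Learn, Keras & TensorFlow.

The example uses the moons dataset (a toy dataset for binary classification), assumed to be already defined as X_train, y_train.

What follows is a rough outline, partly copied and pasted from the textbook's code, just to convey the flavor.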

from sklearn.ensemble import RandomForestClassifier

VotingClassifier, LogisticRegression, and SVC are imported in the same way.

log_clf = LogisticRegression()

rnd_clf = RandomForestClassifier()

svm_clf = SVC()

voting_clf = VotingClassifier(estimators=[('lr', log_clf), ('rf', rnd_clf), ('svc', svm_clf)], voting='hard')

voting_clf.fit(X_train, y_train)

from sklearn.metrics import accuracy_score

for clf in (log_clf, rnd_clf, svm_clf, voting_clf):

    clf.fit(X_train, y_train)

    y_pred = clf.predict(X_test)

    print(clf.__class__.__name__, accuracy_score(y_test, y_pred))

LogisticRegression 0.864

RandomForestClassifier 0.896

SVC 0.888

VotingClassifier 0.904

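The above is an example of 'hard' voting; with 'soft' voting, the accuracy rises to 0.912. So, as the saying goes, two heads are better than one (here, three).

Scikit-Learn provides many convenient functions for machine learning, so anyone who can preprocess the data can use it easily.

Deep learning is one of many machine learning methods.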
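To make the difference between 'hard' and 'soft' voting concrete, here is a minimal pure-Python sketch. The three probabilities are made-up numbers for illustration, not outputs of the classifiers above, and the helper names `hard_vote` and `soft_vote` are hypothetical.

```python
# Hypothetical predicted probabilities of class 1 from three classifiers
# for a single sample (made-up values, for illustration only).
probs_class1 = {"lr": 0.45, "rf": 0.40, "svc": 0.90}


def hard_vote(probs, threshold=0.5):
    """Each classifier casts one vote for its predicted class; the majority wins."""
    votes = [1 if p >= threshold else 0 for p in probs.values()]
    return 1 if sum(votes) > len(votes) / 2 else 0


def soft_vote(probs, threshold=0.5):
    """Average the predicted probabilities, then pick the more likely class."""
    mean_prob = sum(probs.values()) / len(probs)
    return 1 if mean_prob >= threshold else 0


hard_result = hard_vote(probs_class1)
soft_result = soft_vote(probs_class1)
print("hard voting ->", hard_result)
print("soft voting ->", soft_result)
```

In this toy case two classifiers lean weakly toward class 0 and one is strongly confident in class 1, so the two schemes disagree. Soft voting needs probability estimates from every estimator; in scikit-learn, SVC provides them only when created with probability=True.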

9.1.2 What makes deep learning special within the field of machine learning

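Even if the current boom subsides, deep learning will continue to play an active role in many fields.

So the book says, and new techniques have kept emerging since it was published.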

9.1.3 How to think about deep learning

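In perceiving and recognizing language, images, and sound, deep learning has reached abilities close to, or even surpassing, those of humans. This was unthinkable ten years ago.

Input data is represented as vectors. One could say that a model merely transforms input vectors into output vectors. The transformation only needs to be smooth, continuous, and differentiable.

Humans specify how input vectors should correspond to output vectors. The transformation parameters are optimized by gradient descent until the specified correspondence is satisfied.

The model with the optimized parameters becomes the trained model, which can output what it has learned for new input data.

Finally, on the fact that deep learning models are called neural networks, the author argues that current models are neither neural nor networks, and lists several candidate names.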
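The idea in the paragraphs above, adjusting transformation parameters by gradient descent until inputs map to the specified outputs, can be sketched with a one-layer linear model in NumPy. The target mapping y = 3x - 2, the learning rate, and the number of steps are assumptions chosen only for this illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy data: the "human-specified" mapping is y = 3x - 2 (an assumption for this sketch).
x = rng.uniform(-1.0, 1.0, size=(100, 1))
y = 3.0 * x - 2.0

# Parameters of the transformation y_hat = x @ w + b, initialized at zero.
w = np.zeros((1, 1))
b = np.zeros(1)
learning_rate = 0.1

for step in range(500):
    y_hat = x @ w + b                      # forward pass
    error = y_hat - y
    loss = np.mean(error ** 2)             # mean squared error
    grad_w = 2.0 * x.T @ error / len(x)    # gradient of the loss w.r.t. w
    grad_b = 2.0 * np.mean(error, axis=0)  # gradient of the loss w.r.t. b
    w -= learning_rate * grad_w            # gradient descent update
    b -= learning_rate * grad_b

print("learned w:", w.ravel(), "learned b:", b)
```

A deep network stacks many such differentiable transformations, but the training loop has the same shape: forward pass, loss, gradients, update.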

9.1.4 Key enabling technologies

・Incremental algorithmic innovation, first spread over two decades (starting with backpropagation) and then ・・・

・The availability of large amounts of perceptual data, ・・・

・The availability of fast, highly parallel computation hardware at a low price, especially the GPUs produced by NVIDIA・・・

・A complex stack of software layers that makes this computational power available to humans: the CUDA language, frameworks like TensorFlow that do automatic differentiation, and Keras, which makes deep learning accessible to most people.

Today only researchers and specialist engineers write the programs, but tools that anyone can use will probably appear. Keras is the first big step!

9.1.5 The universal machine-learning workflow

1 Define the problem:・・・

2 Identify a way to reliably measure success on your goal. ・・・

3 Prepare the validation process that you'll use to evaluate your models. ・・・

4 Vectorize the data by turning it into vectors and preprocessing in a way ・・・

5 Develop a first model that beats a trivial common-sense baseline, ・・・

6 Gradually refine your model architecture by tuning hyperparameters and adding regularization. ・・・

7 Be aware of validation-set overfitting when tuning hyperparameters: ・・・
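As a small illustration of steps 3 and 5, the sketch below holds out a validation set and computes the accuracy of a trivial baseline that always predicts the most frequent class. The labels are randomly generated and hypothetical; any real model would need to beat this number to be worth keeping.

```python
import random
from collections import Counter

random.seed(0)

# Hypothetical binary labels with roughly 70% of samples in class 0.
labels = [0 if random.random() < 0.7 else 1 for _ in range(1000)]

# Step 3: hold out a validation set (simple hold-out split).
split = int(0.8 * len(labels))
train_labels, val_labels = labels[:split], labels[split:]

# Step 5: the trivial baseline always predicts the most frequent training class.
majority_class = Counter(train_labels).most_common(1)[0][0]
baseline_accuracy = sum(1 for y in val_labels if y == majority_class) / len(val_labels)

print("majority class:", majority_class)
print("baseline validation accuracy:", round(baseline_accuracy, 3))
```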

Personal opinion: for conventional machine learning, I recommend A. Géron's textbook.

9.1.6 Key network architectures

・Vector data --- Densely connected network (Dense layers)

・Image data --- 2D convnets

・Sound data (for example, waveform) --- Either 1D convnets (preferred) or RNNs

・Text data --- Either 1D convnets (preferred) or RNNs

・Timeseries data --- Either RNNs (preferred) or 1D convnets

・Other types of sequence data ---

・Video data --- Either 3D convnets (

・Volumetric data --- 3D convnets.

DENSELY CONNECTED NETWORKS

binary classification

from keras import models, layers

model = models.Sequential()

model.add(layers.Dense(32, activation='relu', input_shape=(num_input_features,)))

model.add(layers.Dense(32, activation='relu'))

model.add(layers.Dense(1, activation='sigmoid'))

model.compile(optimizer='rmsprop', loss='binary_crossentropy')

CONVNETS

typical image-classification

model = models.Sequential( )

model.add(layers.SeparableConv2D(32, 3, activation='relu',
                                 input_shape=(height, width, channels)))

model.add(layers.SeparableConv2D(64, 3, activation='relu'))

model.add(layers.MaxPooling2D(2))

model.add(layers.SeparableConv2D(64, 3, activation='relu'))

model.add(layers.SeparableConv2D(128, 3, activation='relu'))

model.add(layers.MaxPooling2D(2))

model.add(layers.SeparableConv2D(64, 3, activation='relu'))

model.add(layers.SeparableConv2D(128, 3, activation='relu'))

model.add(layers.GlobalAveragePooling2D( ))

model.add(layers.Dense(32, activation='relu'))

model.add(layers.Dense(num_classes, activation='softmax'))

model.compile(optimizer='rmsprop', loss='categorical_crossentropy')

Once you get used to it, I think this is easy-to-read code that anyone can write without much trouble.
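To see what the convnet above does to the spatial dimensions, here is a short pure-Python sketch that traces the height (or width) through the convolution and pooling layers. It assumes the Keras defaults are in effect (padding='valid' and stride 1 for the convolutions, pooling stride equal to the pool size), and the input size of 64 is an arbitrary choice for illustration.

```python
def conv_valid(size, kernel=3):
    """Output size of a convolution with 'valid' padding and stride 1."""
    return size - kernel + 1


def max_pool(size, pool=2):
    """Output size of max pooling with stride equal to the pool size."""
    return size // pool


# The layer sequence of the convnet above, ignoring the channel dimension.
layers_sequence = ["conv", "conv", "pool", "conv", "conv", "pool", "conv", "conv"]


def trace_spatial_size(size):
    """Return the spatial size after the input and after each layer."""
    sizes = [size]
    for kind in layers_sequence:
        size = conv_valid(size) if kind == "conv" else max_pool(size)
        sizes.append(size)
    return sizes


# Assumed input height (and width) of 64 pixels, chosen only for illustration.
sizes = trace_spatial_size(64)
print(sizes)  # [64, 62, 60, 30, 28, 26, 13, 11, 9]
```

GlobalAveragePooling2D then collapses the remaining spatial grid into one value per channel, which is why the Dense layers that follow do not depend on the input size.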

RNNs

stacked RNN layers for binary classification of vector sequences

model = models.Sequential()

model.add(layers.LSTM(32, return_sequences=True,
                      input_shape=(num_timesteps, num_features)))

model.add(layers.LSTM(32, return_sequences=True))

model.add(layers.LSTM(32))

model.add(layers.Dense(num_classes, activation='sigmoid'))

model.compile(optimizer='rmsprop', loss='binary_crossentropy')

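Simply by imitating these examples, you can use code that performs complex computations; after that, by tuning the hyperparameters to suit the purpose, anyone can achieve reasonable performance.

Impression: Studying like this makes me feel as if I understand, but as I have written many times on this blog, when I take part in Kaggle I am helpless as soon as the data structure gets a little complicated. And when transfer learning or fine-tuning is needed, there are many things I do not understand, such as how to obtain the parameters, how to save them to memory, and how to handle extremely large data, so I have given up partway each time. Unless I clear these hurdles one by one, there is no way forward.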

9.1.7 The space of possibilities

・Mapping vector data to vector data

-- Predictive healthcare -- Mapping patient medical records to predictions of patient outcomes

-- Behavioral targeting -- Mapping a set of website attributes with data on how long a user will spend on the website

-- Product quality control -- Mapping a set of attributes relative to an instance of a manufactured product with the probability that the product will fail by next year

・Mapping image data to vector data

-- Doctor assistant

-- Self-driving vehicle

-- Board game AI

-- Diet helper

-- Age prediction

・Mapping timeseries data to vector data

-- Weather prediction

-- Brain-computer interfaces

・Mapping text to text

-- Smart reply

-- Answering questions

-- Summarization

・Mapping images to text

-- Captioning

・Mapping text to images

-- Conditional image generation

-- Logo generation/selection

・Mapping images to images

-- Super-resolution

-- Visual depth sensing

・Mapping images and text to text

-- Visual QA

・Mapping video and text to text

-- Video QA

9.2 The limitations of deep learning

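Deep learning has infinite possibilities.

However, there are also infinitely many things it cannot handle at all.

For example, no matter how many pairs of software specifications and the corresponding software (computer code) you collect and feed into a deep learning model, it cannot output a program that meets a new specification.

This is just one example.

Reasoning logically, applying the scientific method, and making long-term plans are out of reach. There are many things today's deep learning cannot achieve, no matter how much example data is fed in.

It merely maps one vector space to another through continuous transformations.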

9.2.1 The risk of anthropomorphizing machine-learning models

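When the general public hears "AI", they assume that something with abilities surpassing humans has already been realized.

The results do sometimes exceed the abilities of an ordinary person, but what exceeds them is the result, not the inner workings.

Beyond that, troublesome things can happen, and this is what the book calls a risk.

The performance of deep learning depends heavily on the model and the training data.

In general, when text or images unlike anything in the training data are input, the output can be wildly wrong.

That is natural since the model has not learned them, but it makes mistakes so large that a human would never make them.

This can hardly be called intelligence.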

9.2.2 Local generalization vs. extreme generalization

A comparison of generalization in deep learning with generalization in humans.
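As a rough illustration of local generalization, the sketch below fits a smooth function to samples from a limited input range and then evaluates it both near and far from that range. A degree-5 polynomial stands in for a learned mapping here; it is not a deep network, and the target function sin(x) and the ranges are assumptions made for this example.

```python
import numpy as np

# Train on x in [0, pi]; the target function sin(x) is an assumption for this sketch.
x_train = np.linspace(0.0, np.pi, 50)
y_train = np.sin(x_train)

# Fit a degree-5 polynomial as a stand-in for a learned smooth mapping.
coeffs = np.polyfit(x_train, y_train, deg=5)


def mean_abs_error(x):
    """Mean absolute difference between the fitted curve and sin(x)."""
    return float(np.mean(np.abs(np.polyval(coeffs, x) - np.sin(x))))


# Inputs close to the training data versus inputs far from it.
x_near = np.linspace(0.1, 3.0, 50)
x_far = np.linspace(2.0 * np.pi, 3.0 * np.pi, 50)

error_near = mean_abs_error(x_near)
error_far = mean_abs_error(x_far)
print("error near training data:", error_near)
print("error far from training data:", error_far)
```

The fit is accurate where it has seen data and breaks down away from it, whereas a person who has grasped that the curve is periodic can extend it to inputs never seen before.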

9.2.3 Wrapping up

9.3 The future of deep learning

・Models closer to general-purpose computer programs, built on top of far richer primitives than the current differentiable layers.

・New forms of learning that make the previous point possible, allowing models to move away from differentiable transforms.

・Models that require less involvement from human engineers.

・Greater, systematic reuse of previously learned features and architectures, such as meta-learning systems using reusable and modular program subroutines.

9.3.1 Models as programs

I expect the emergence of a crossover subfield between deep learning and program synthesis, where instead of generating programs in a general-purpose language, we'll generate neural networks (geometric data-processing flows) augmented with a rich set of algorithmic primitives, such as for loops and many others (see figure 9.5).

Figure 9.5 A learned program relying on both geometric primitives (pattern recognition, intuition) and algorithmic primitives (reasoning, search, memory)

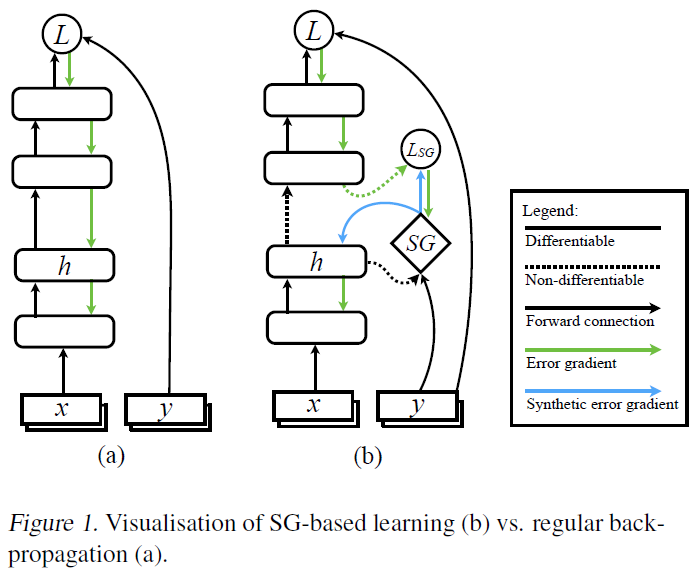

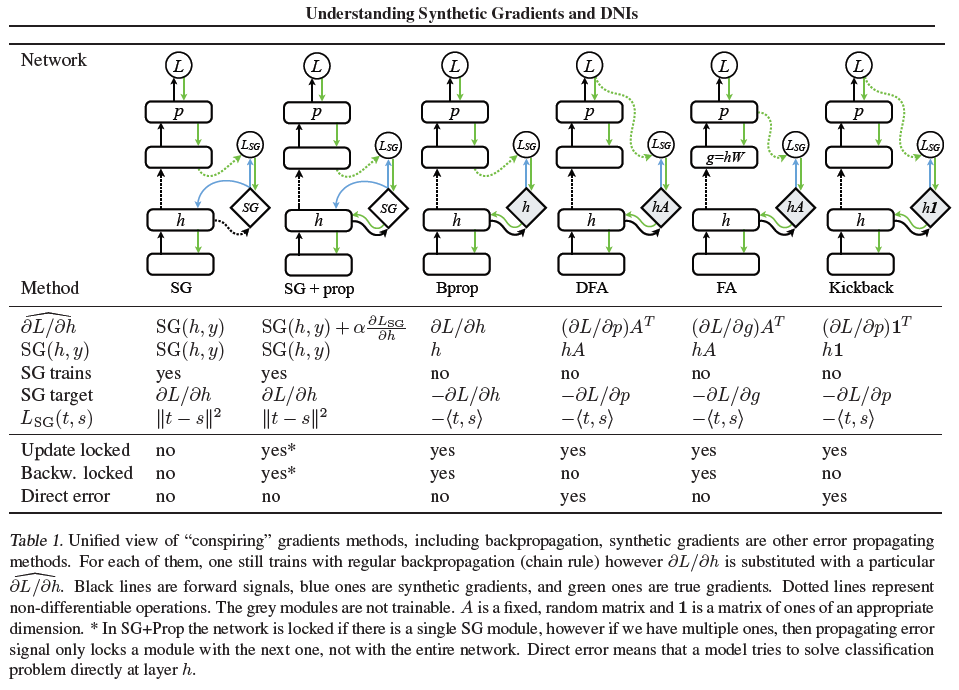

9.3.2 Beyond backpropagation and differentiable layers

The following passage caught my attention, so I looked for literature on synthetic gradients.

So we can make backpropagation more efficient by introducing decoupled training modules with a synchronization mechanism between them, organized in a hierarchical fashion. This strategy is somewhat reflected in DeepMind's recent work on synthetic gradients.

Understanding Synthetic Gradients and Decoupled Neural Interfaces

Wojciech Marian Czarnecki, Grzegorz Swirszcz, Max Jaderberg, Simon Osindero, Oriol Vinyals and Koray Kavukcuoglu

DeepMind, London, United Kingdom.

Proceedings of the 34th International Conference on Machine Learning, Sydney, Australia, PMLR 70, 2017.

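What exactly are synthetic gradients (SG), which apparently lie beyond backpropagation?

Looking at Figure 1, I get the vague impression that SG is a way of coping with cases where backpropagation cannot be applied because something is not differentiable.

It does not look like something an ordinary person can follow.

Still, I might try to work through it tomorrow, even if it turns out to be hopeless.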

9.3.3 Automated machine learning

9.3.4 Lifelong learning and modular subroutine reuse

When the system finds itself developing similar program subroutines for several different tasks, it can come up with an abstract, reusable version of the subroutine and store it in the global library (see figure 9.6). Such a process will implement abstraction: a necessary component for achieving extreme generalization. A subroutine that's useful across different tasks and domains can be said to abstract some aspect of problem solving. This definition of abstraction is similar to the notion of abstraction in software engineering. These subroutines can be either geometric (deep-learning modules with pretrained representations) or algorithmic (closer to the libraries that contemporary software engineers manipulate).

Figure 9.6 A meta-learner capable of quickly developing task-specific models using reusable primitives (both algorithmic and geometric), thus achieving extreme generalization.

Searching for meta-learning turned up the following survey.

Meta-Learning in Neural Networks: A Survey

Timothy Hospedales, Antreas Antoniou, Paul Micaelli, Amos Storkey

arXiv:2004.05439v1 [cs.LG] 11 Apr 2020

Abstract: The field of meta-learning, or learning-to-learn, has seen a dramatic rise in interest in recent years. Contrary to conventional approaches to AI where a given task is solved from scratch using a fixed learning algorithm, meta-learning aims to improve the learning algorithm itself, given the experience of multiple learning episodes. This paradigm provides an opportunity to tackle many of the conventional challenges of deep learning, including data and computation bottlenecks, as well as the fundamental issue of generalization. In this survey we describe the contemporary meta-learning landscape. We first discuss definitions of meta-learning and position it with respect to related fields, such as transfer learning, multi-task learning, and hyperparameter optimization. We then propose a new taxonomy that provides a more comprehensive breakdown of the space of meta-learning methods today. We survey promising applications and successes of meta-learning including few-shot learning, reinforcement learning and architecture search. Finally, we discuss outstanding challenges and promising areas for future research.

9.3.5 The long-term vision

A paper that seems relevant

Turning 30: New Ideas in Inductive Logic Programming

Andrew Cropper, Sebastijan Dumančić, and Stephen H. Muggleton

arXiv:2002.11002v4 [cs.AI] 22 Apr 2020

Abstract

Common criticisms of state-of-the-art machine learning include poor generalisation, a lack of interpretability, and a need for large amounts of training data. We survey recent work in inductive logic programming (ILP), a form of machine learning that induces logic programs from data, which has shown promise at addressing these limitations. We focus on new methods for learning recursive programs that generalise from few examples, a shift from using hand-crafted background knowledge to learning background knowledge, and the use of different technologies, notably answer set programming and neural networks. As ILP approaches 30, we also discuss directions for future research.

<Personal trial and error>

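What is overwhelmingly lacking in deep learning for image recognition is the quality and quantity of information.

Whether for reasoning or for abstraction, if there is little information about an object, it is impossible to think logically about it, abstract it, classify it, or integrate it.

In object recognition, humans can make all kinds of judgments precisely because they hold all kinds of information about objects: size, weight, parts, whether it is a living thing or an artifact, whether it is parallel or perpendicular to the ground, whether it is in the air or in water, whether it is stationary or moving, and so on.

The information that can be obtained from an image is limited. With current deep learning it is difficult, for example, even to tell from footage of a ship or an airplane whether it is a toy or the real thing. To make that possible, a large number of labeled images of toys and real objects must be supplied, and the images must clearly capture the features of the toys and of the real objects.

Humans recognize objects in three-dimensional space including time. In addition, as humans grow, they acquire information about objects as knowledge.

Could the way AlphaZero becomes stronger simply by playing game after game be applied to solving ARC tasks? Every game brings new positions, there is an end of the game, and success means having captured more territory than the opponent. There are rules for how the game proceeds.

In ARC, examples are given so that the relationship between the input grid and the output grid is uniquely determined. Several example pairs are given, and a rule is hidden in them. Having to read out the rule is what makes ARC unlike anything before it.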
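To make "reading out the rule from a few example pairs" concrete, here is a toy sketch that searches a tiny, hand-picked set of grid transformations for one consistent with every example. The example grids are hypothetical and merely in the style of ARC, not an actual ARC task, and real tasks need a far richer hypothesis space than these four functions.

```python
# Candidate grid transformations (a tiny, hand-picked hypothesis space).
def identity(grid):
    return [row[:] for row in grid]


def flip_horizontal(grid):
    return [row[::-1] for row in grid]


def flip_vertical(grid):
    return [row[:] for row in grid[::-1]]


def transpose(grid):
    return [list(col) for col in zip(*grid)]


CANDIDATES = [identity, flip_horizontal, flip_vertical, transpose]


def find_rule(examples):
    """Return the first candidate consistent with every (input, output) pair."""
    for rule in CANDIDATES:
        if all(rule(inp) == out for inp, out in examples):
            return rule
    return None


# Hypothetical demonstration pairs in the style of ARC (not an actual ARC task).
examples = [
    ([[1, 0, 0], [2, 0, 0]], [[0, 0, 1], [0, 0, 2]]),
    ([[0, 3], [4, 0]], [[3, 0], [0, 4]]),
]

rule = find_rule(examples)
test_input = [[5, 0, 6]]
print(rule.__name__, rule(test_input))
```

The hard part of ARC is exactly what this sketch sidesteps: the space of possible rules is not given in advance, so the solver has to construct candidate rules rather than pick one from a fixed list.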

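Consider what it means to give a program a certain ability.

Both machine learning and deep learning give programs a variety of abilities.

It is often emphasized that deep learning learns anything just from input data without being explicitly programmed, but it still has the four arithmetic operations, as well as tensor operations, probability, and statistics, built in.

In machine learning, sklearn lets you run SVC, LogisticRegression, RandomForest, and so on with a single command.

For the ARC tasks, I had been thinking of invoking human intelligence tests and requiring of a program the same conditions (circumstances) under which a human faces an intelligence test (as a reference point, whether or not that is feasible), but I have come to think that this is unnecessary. The reason is that, even if a program satisfying the same conditions as a human facing an intelligence test could be developed, I no longer believe that this would advance AI. What we want is not a program that can solve intelligence tests under the conditions in which a human takes them, but a program that can solve tasks by thinking autonomously. Ideally, that thinking process would draw on all of humanity's knowledge, experience, and wisdom to date.

There is a leap in my logic. I have not understood "The Measure of Intelligence". I should go back to II.2 Defining intelligence: a formal synthesis and work through it!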

Yesterday (May 2), while looking for F. Chollet's recent writings, I found the following special issue.

Journal of Artificial General Intelligence

Special Issue “On Defining Artificial Intelligence”

—Commentaries and Author’s Response

Volume 11, Issue 2

February 2020

DOI: 10.2478/jagi-2020-0003

Editors: Dagmar Monett, Colin W. P. Lewis, and Kristinn R. Thórisson

It appears to be the latest debate over the definition of AI, with the discussion centered on Pei Wang's research.

Pei Wang’s paper titled “On Defining Artificial Intelligence” was published in a special issue of the Journal of Artificial General Intelligence (JAGI) in December of last year (Wang, 2019). Wang has been at the forefront of AGI research for over two decades. His non-axiomatic approach to reasoning has stood as a singular example of what may lie beyond narrow AI, garnering interest from NASA and Cisco, among others. We consider his article one of the strongest attempts, since the beginning of the field, to address the long-standing lack of consensus for how to define the field and topic of artificial intelligence (AI). In the recent AGISI survey on defining intelligence (Monett and Lewis, 2018), Pei Wang’s definition,

The essence of intelligence is the principle of adapting to the environment while working with insufficient knowledge and resources. Accordingly, an intelligent system should rely on finite processing capacity, work in real time, open to unexpected tasks, and learn from experience. This working definition interprets “intelligence” as a form of “relative rationality” (Wang, 2008),

was the most agreed-upon definition of artificial intelligence with more than 58.6% of positive (“strongly agree” or “agree”) agreement by the respondents (N=567). Due to the greatly increased public interest in the subject, and a sustained lack of consensus on definitions for AI, the editors of the Journal of Artificial General Intelligence decided to organize a special issue dedicated to its definition, using the target-commentaries-response format. The goal of this special issue of the JAGI is to present the commentaries to (Wang, 2019) that were received together with the response by Pei Wang to them.

In this special issue, F. Chollet wrote the following article.

Chollet, F. 2020. A Definition of Intelligence for the Real World? Journal of Artificial General Intelligence 11(2):27–30.

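Chollet agrees with part of Wang's argument and rejects part of it, but Wang's rebuttal seems vague to me. Granted that differences between statements made in words that cannot be measured quantitatively cannot be settled like a mathematical proof, I still find it hard to accept a rebuttal that amounts to saying the criticism misses the point or that it is explained in detail in another paper. That said, I am in no position to pass judgment on arguments at this level.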